Remote Access Windows Computing (Terminal Servers)

CSDE maintains Microsoft Windows servers for general-use computing through remote access. These permit anyone with a CSDE Windows Network account to sign in to our file servers, access datasets, and run statistical software from anywhere in the world in a familiar Windows desktop environment.

If you don’t yet have a CSDE Computing Account, please request one here.

The Terminal Servers are rebooted every Thursday night/Friday morning from 3:00 AM to 5:30 AM PST while we apply updates. They will not be available during this time.
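If you schedule long-running work, it can help to check a planned time against the weekly reboot window described above. The following is a minimal sketch, not a CSDE-provided tool: the function name `in_terminal_server_maintenance` is hypothetical, and it assumes the timestamp you pass in is already expressed in Pacific local time.

```python
from datetime import datetime, time

# Weekly reboot window stated above: Friday, 3:00 AM to 5:30 AM (Pacific).
WINDOW_START = time(3, 0)
WINDOW_END = time(5, 30)
FRIDAY = 4  # datetime.weekday() counts Monday as 0, so Friday is 4


def in_terminal_server_maintenance(local_dt: datetime) -> bool:
    """Return True if local_dt (assumed Pacific local time) is in the window."""
    if local_dt.weekday() != FRIDAY:
        return False
    return WINDOW_START <= local_dt.time() < WINDOW_END
```

For example, calling the function with `datetime(2025, 1, 3, 4, 15)` (a Friday at 4:15 AM) falls inside the window, while the same clock time on a Thursday does not.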

Additionally, please save your working files to your H: drive when working on the Terminal Servers so that your data remains accessible.

See here for a complete tutorial on accessing the CSDE Windows terminal servers, including links for download and use of Husky OnNet.

There are currently four general-purpose CSDE Windows terminal servers available:

For student users only:

- csde-ts4.csde.washington.edu

- csde-ts5.csde.washington.edu

For faculty/staff/off-site users:

- csde-ts1.csde.washington.edu

- csde-ts2.csde.washington.edu
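If you connect often, you may prefer to keep a saved Remote Desktop (`.rdp`) file for each server. The sketch below is illustrative only and is not part of the CSDE tutorial: the group labels (`student`, `faculty_staff_offsite`) and the function names are hypothetical, and the generated file contains only the `full address` setting, leaving everything else at the Remote Desktop client's defaults.

```python
from pathlib import Path

# Hostnames as listed above; the group labels are made up for this sketch.
TERMINAL_SERVERS = {
    "student": [
        "csde-ts4.csde.washington.edu",
        "csde-ts5.csde.washington.edu",
    ],
    "faculty_staff_offsite": [
        "csde-ts1.csde.washington.edu",
        "csde-ts2.csde.washington.edu",
    ],
}


def rdp_file_text(hostname: str) -> str:
    """Build the body of a minimal .rdp file that targets hostname."""
    # "full address" is the .rdp setting that names the remote computer.
    return f"full address:s:{hostname}\n"


def write_rdp_files(group: str, directory: str = ".") -> list:
    """Write one .rdp file per server in the group and return the paths."""
    paths = []
    for host in TERMINAL_SERVERS[group]:
        short_name = host.split(".")[0]  # e.g. "csde-ts4"
        path = Path(directory) / f"{short_name}.rdp"
        path.write_text(rdp_file_text(host), encoding="utf-8")
        paths.append(path)
    return paths
```

Calling `write_rdp_files("student")` would create `csde-ts4.rdp` and `csde-ts5.rdp` in the current directory; opening either file starts a Remote Desktop session to that server and prompts for your credentials as usual.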

Using servers csde-TS4.csde.washington.edu and csde-TS5.csde.washington.edu is slightly different than using servers TS1/2…

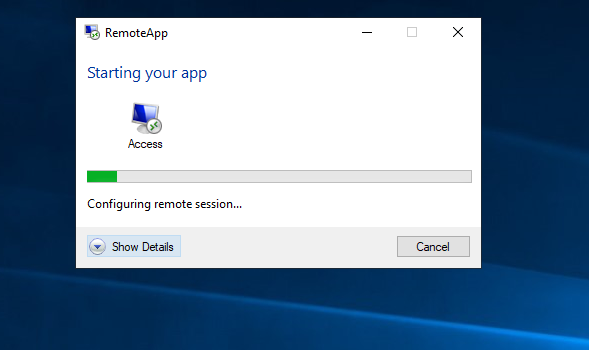

All available applications can be found by opening the “Applications” folder on the desktop. To launch an application, simply click on the shortcut icon (e.g. SPSS 23). After clicking the shortcut icon, you will see a small pop-up window like the one in the following image.

At the same time, a small window will also appear at the lower right-hand corner of the desktop window.

After a couple of minutes, you should see the application window open on the screen.

**** Please note that the first time you launch an application, it may take a bit longer. Please be patient; later launches will be faster. If an application does not launch after you double-click it, double-click it again to relaunch it. Another trick is to click another application shortcut, which will speed up the appearance of the program. ****

You can also launch an application by clicking the “Start” button at the lower left-hand corner and looking for the application in the list of Programs.

**** If your program is stuck or frozen, you can terminate it by using Task Manager. To do that, click “Start” at the lower left-hand corner and launch the application “Task Manager (remote)”. When the Task Manager window is open, find the frozen application under the “User” tab and end the task. ****

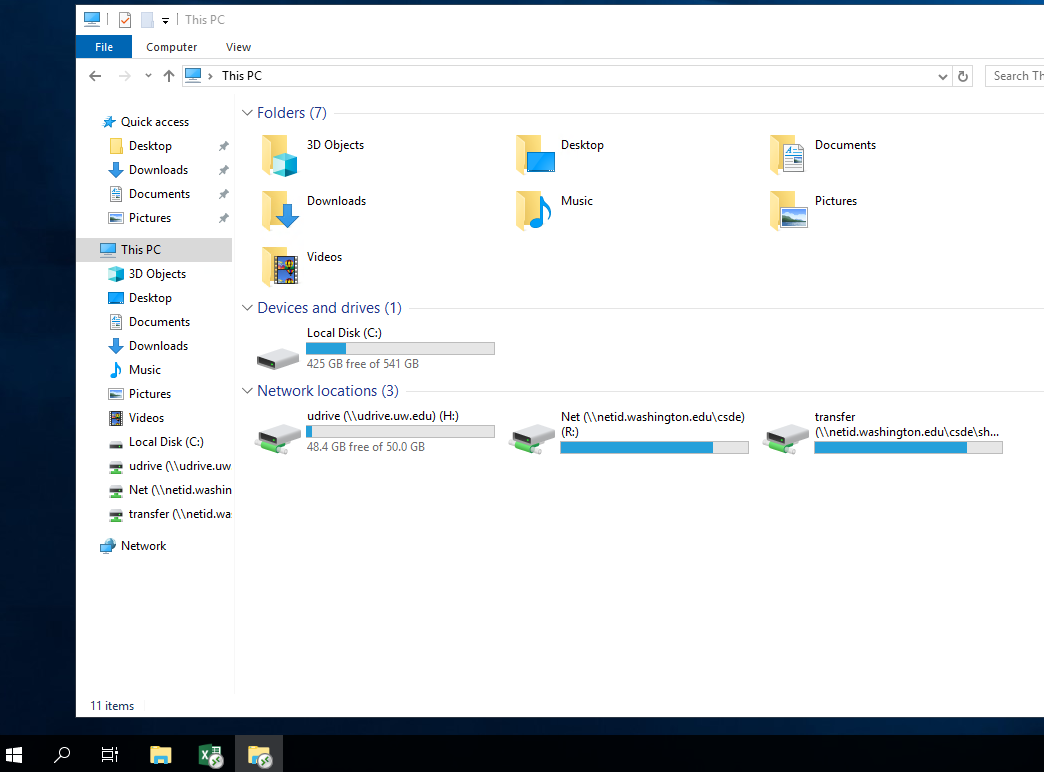

To look for files in your H:, R:, and T: drives, first open the “This PC” shortcut icon on the desktop of your remote desktop session window. This is the most reliable way to locate your files.

To avoid any unnecessary loss of data, please make sure to save your work frequently and do not leave work unsaved if you disconnect from the remote desktop server.

Users should always save their files on their H: drive, which is the same as their UW UDrive. The server is set up to redirect the Documents folder, the Downloads folder, and the Desktop to the user’s UW UDrive.

The following image shows what you will see when you click on the “This PC” shortcut icon. Notice that the icons on the taskbar look slightly different than normal icons since they are programs running on a remote server.

These servers carry a full complement of ready-to-use statistical and social-sciences software packages. The terminal servers have nearly identical software packages installed and provide access to all your personal and project files. All our servers are Dell R6525 PowerEdge servers running the Microsoft Windows Server 2022 operating system. Each has dual 48-core AMD 7413 processors with more than 1TB of system memory.

Please send your questions to csde_help@uw.edu

There are five drives available for use on CSDE terminal servers. Once logged onto a terminal server, they can be found in the My Computer menu.

| Windows Drive Letter | Use for | Backed Up | Private | Shareable with others |
| --- | --- | --- | --- | --- |
| H: | The H: drive is your home directory, where personal files can be saved. It can only be accessed by you and is backed up regularly. | Yes | Yes | No |
| O: | The O: drive is for CSDE administrative documents and is only available to CSDE personnel. | | | |
| R: | The R: drive is for storage of project and data sets. Multiple users working on the same project can have read/write access to files/folders. | Yes | No | Yes |
| T: | The T: drive is used for temporary storage and file sharing. T: is not backed up, so save copies elsewhere. | No | No | Yes |
| U: | The U: drive is used for personal file storage. It can only be accessed by you and is backed up regularly. It can be self-service restored following these UW-IT directions. The U: drive is the same as your UW UDrive. | Yes | Yes | No |

Be careful not to permanently store data on the desktop, in the C: drive, or in the Downloads folder. These locations are regularly cleared of files to free up space, so any files lost may be impossible to recover.
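As a rough illustration of the guidance above, a script that saves output could check the target drive letter before writing. This is a minimal sketch under stated assumptions, not a CSDE tool: the function name `storage_advice` and the returned messages are hypothetical, the drive groupings are taken from the drive descriptions in this document, and O: is left out because the text does not say whether it is backed up.

```python
from pathlib import PureWindowsPath

# Groupings taken from the drive descriptions in this document.
BACKED_UP_DRIVES = {"H:", "R:", "U:"}
NOT_BACKED_UP_DRIVES = {"T:"}
CLEARED_DRIVES = {"C:"}


def storage_advice(path: str) -> str:
    """Classify a Windows path by its drive letter only."""
    drive = PureWindowsPath(path).drive.upper()
    if drive in CLEARED_DRIVES:
        return "cleared regularly: move this file to H: or a project folder on R:"
    if drive in NOT_BACKED_UP_DRIVES:
        return "not backed up: keep a copy elsewhere"
    if drive in BACKED_UP_DRIVES:
        return "backed up"
    return "unknown: not covered by the drive table"
```

Because the check looks only at the drive letter, it cannot tell whether a Desktop or Downloads folder has been redirected to another drive; treat the result as a reminder rather than a guarantee.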

Normally, one has to be on campus to view the electronic resources offered by UW Libraries. However, CSDE allows remote access through CSDE’s “Windows Terminal Server,” which all CSDE Affiliates are invited to use. Once you are logged into the server, it allows you to search the UW Libraries systems and use their resources as if you were on campus.

To find a journal article in the UW database, follow the steps below.

- Sign in to the terminal server using your CSDE account

- Open a web browser and go to www.lib.washington.edu/

- Enter the journal title into the search box at “UW WorldCat: Search UW Libraries and beyond” on the library homepage

- Journal availability information will be displayed. Use whichever database you would like. You can then search the database for your articles by title/author/keyword/issue/etc.

CSDE computer users may request a CSDE project folder in the R: drive to share data with other users by filling out our Project Folder Request form. We do our best to set up the folder that same day but sometimes it may take longer or we may need to get more information from the requesting user.

This project folder will reside on a CSDE network file server and will therefore be backed up daily. In addition, we’ll configure it in such a way that only the members of your group have access to the folder.

Please note that users must request a folder inside the R: drive before they begin storing files there. Any unauthorized files stored inside the R: drive may be deleted without advance notice. Also, project folders must be requested with at least 2 members. We do not allow a project folder to be created for just a single user.

Users may not change permissions or inheritance on their project folders; this includes granting access to another user.

If you would like to grant access to someone, email csde_help@u.washington.edu, including the project folder name and the usernames you would like to add. In the event that the requested user lacks a CSDE account, we will ask that they first apply for an account online.

Simulation Cluster

CSDE’s Simulation Cluster is a group of 2 Dell C6320 PowerEdge Windows Server 2025 servers featuring simulation-specific software intended for computationally intensive work. The Sim Cluster is available for use by CSDE affiliates and students from all CSDE training program partner departments.

To start using the Simulation Cluster, you must first submit a request to gain access.

The Sim Cluster is rebooted on the last Friday of each month and is therefore offline from 3:00 AM to 10:00 AM PST while we apply updates. Please plan your work accordingly.
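To plan multi-day jobs around the monthly reboot, you can compute the date of the last Friday of a given month. The sketch below is one way to do that with the Python standard library; the function names `last_friday` and `sim_cluster_window` are hypothetical, and the returned times are naive datetimes that are assumed to mean Pacific local time.

```python
import calendar
from datetime import date, datetime, time, timedelta


def last_friday(year: int, month: int) -> date:
    """Return the date of the last Friday in the given month."""
    last_day = date(year, month, calendar.monthrange(year, month)[1])
    # Step back from the month's final day to the nearest Friday.
    days_past_friday = (last_day.weekday() - calendar.FRIDAY) % 7
    return last_day - timedelta(days=days_past_friday)


def sim_cluster_window(year: int, month: int) -> tuple:
    """Return (start, end) of that month's reboot window, 3:00 AM to 10:00 AM."""
    day = last_friday(year, month)
    return (datetime.combine(day, time(3, 0)), datetime.combine(day, time(10, 0)))
```

A job that would still be running when the window returned by `sim_cluster_window` begins is better started after the reboot, or split so that it can be resumed.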

- All off-campus users must connect their local system to the Husky On-Net VPN.

Faculty, staff, and students, see: Set up Husky On-Net VPN.

Non-UW members, see: Connecting to CSDE’s HON-D Departmental VPN

- Connect to either of the two Sim Cluster nodes (e.g., sim1.csde.washington.edu or sim2.csde.washington.edu) using Remote Desktop Connection.

- Log in with your NetID username and password.

To start a new session for an additional simulation job, simply open another Remote Desktop Connection window to a different sim node (e.g., sim2.csde.washington.edu).

To resume a job that’s currently running, reconnect to it by logging back in directly to its node. (The My Computer icon on your session’s desktop will indicate which cluster node you’re using; see the image to the right.) For example, if you disconnect from SIM2, your session would continue running there. To reenter and check the status of jobs, you need to use Remote Desktop Connection to connect back to the Sim node with your job.


- List of programs available on CSDE Sim cluster

- Each cluster node is a Dell PowerEdge M640 or C6320 blade server with 16 2.00GHz Intel Xeon CPUs and 384GB or more of RAM. They run Windows Server 2025 (64-bit) Datacenter Edition.

- Information on the H: and R: drives is backed up to tape, but like the Terminal servers, files on the Desktop and C: drive of the Sim Cluster are not backed up. The Desktop and C: drive are wiped whenever the sim nodes are re-imaged, so make sure you store important data elsewhere. Though we will do our best to minimize disruptions, CSDE is not responsible for data lost due to maintenance reboots or unexpected crashes.

- You are expected to compose a brief note and endorsement for future funding proposals.

- Do not overload the cluster with too many computationally intensive jobs—it prevents others from using the resource.

- If running multiple jobs, start them on several nodes rather than on a single one. Please do not run jobs on more than three sim nodes.

- Report any problems with the sim nodes or software to csde_help@uw.edu.

Click a Sim node to view its current system resource information. Please note that the links can be opened only from a browser on a campus network. It is advisable to use a Sim node with a lower percentage of memory and CPU usage:

SIM1

SIM2

SIM3

SIM4

SIM5

SIM6

SIM7

SIM8

SIM9

SIM10
